Seems bad when the Supreme Court is like “gosh, this case from an obviously profoundly immoral guy acting in bad faith really tests our ability to rule in cases of law that presume good faith on the part of the most powerful person in the world. We’d better punt.”

It was a whirlwind couple of days in multiple ways. I finished my Seattle coffee jaunt at Cascade Coffee Works; two days later, I’m making cappuccino in my own kitchen.

I really enjoyed visiting MOHAI, the Museum of History and Industry in Seattle. It’s a beautiful space with a lot of clever, intricate hands-on exhibits, and a coffee shop to go with it (continuing my South Lake Union cappuccino tour).

I’ve always had a thing for traffic mirror selfies, so absolutely, 100% couldn’t resist a whole wall of them.

South Lake Union coffee tour continued at Espresso Vivace this morning. Beautiful shop and delicious cappuccino.

Far from home, having arrived in Seattle after a two-day trip. I haven’t been in this part of the country for many years; it’s quite something to be back.

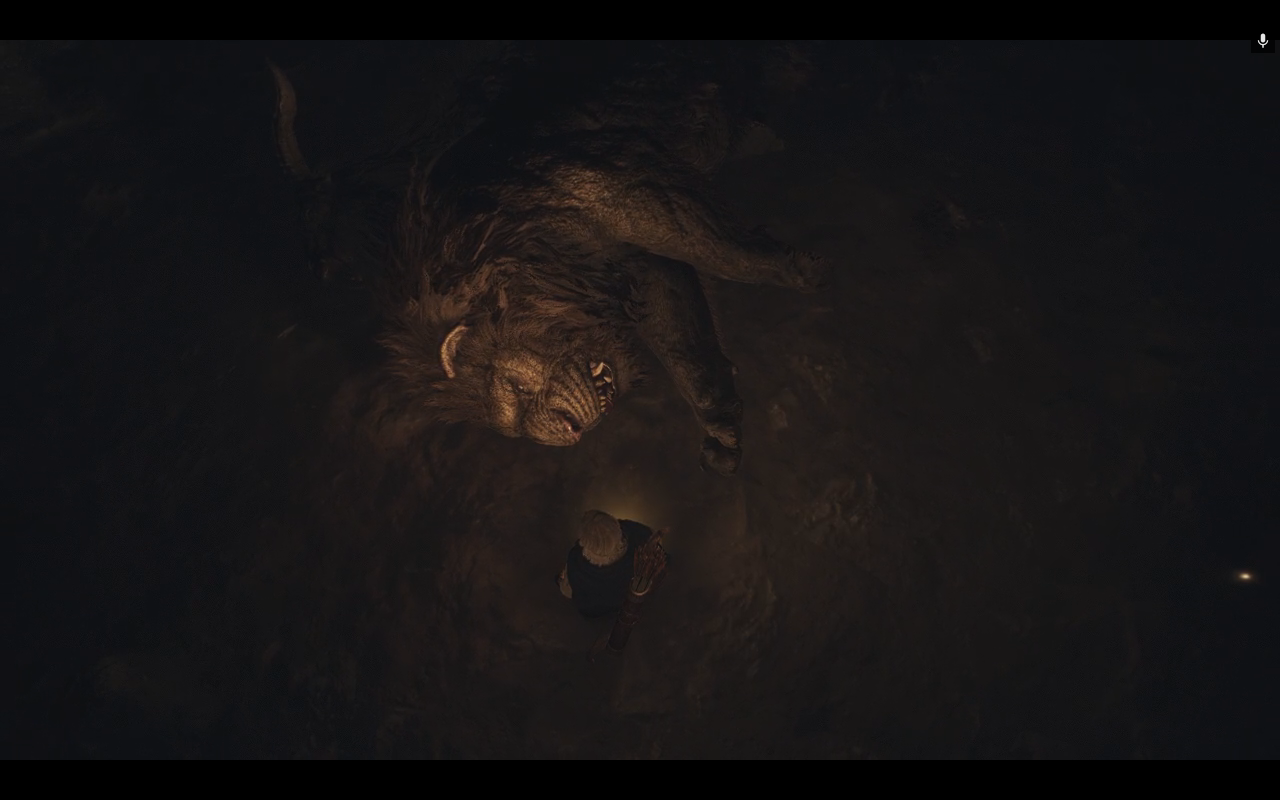

Last night’s Dragon’s Dogma 2 adventure: got lost in a cave system and stumbled into a gryphon that my party fought for what felt like hours, and finally defeated. It just felt epic.

It’s a big Dragon’s Dogma 2 weekend around here. The game is great, and has a very good photo mode, too.

I’ve nursed a finicky NUC as my Roon server for four years, and I was this close to picking up a Mac Mini to do the job, though I wasn’t sure I really wanted another whole computer. So I’m finding the new Nucleus One pretty appealing.