I’ve been thinking some about “expressive” work with code and why I think it’s useful and important, and I wrote up a little blog post about it over at my Datablog: “What do we mean when we say ‘code is expressive,’ anyway?”

Most impactful personal technology of 2023? For me, good insoles, on the recommendation of a podiatrist, were a real game-changer.

Wondering if there’s still time to get really into, like, tmux before going back to work.

Don’t mind me; I’m just testing out enabling “like” post types.

Here’s a post of my own that I like, about learning some Python in a comfortable place.

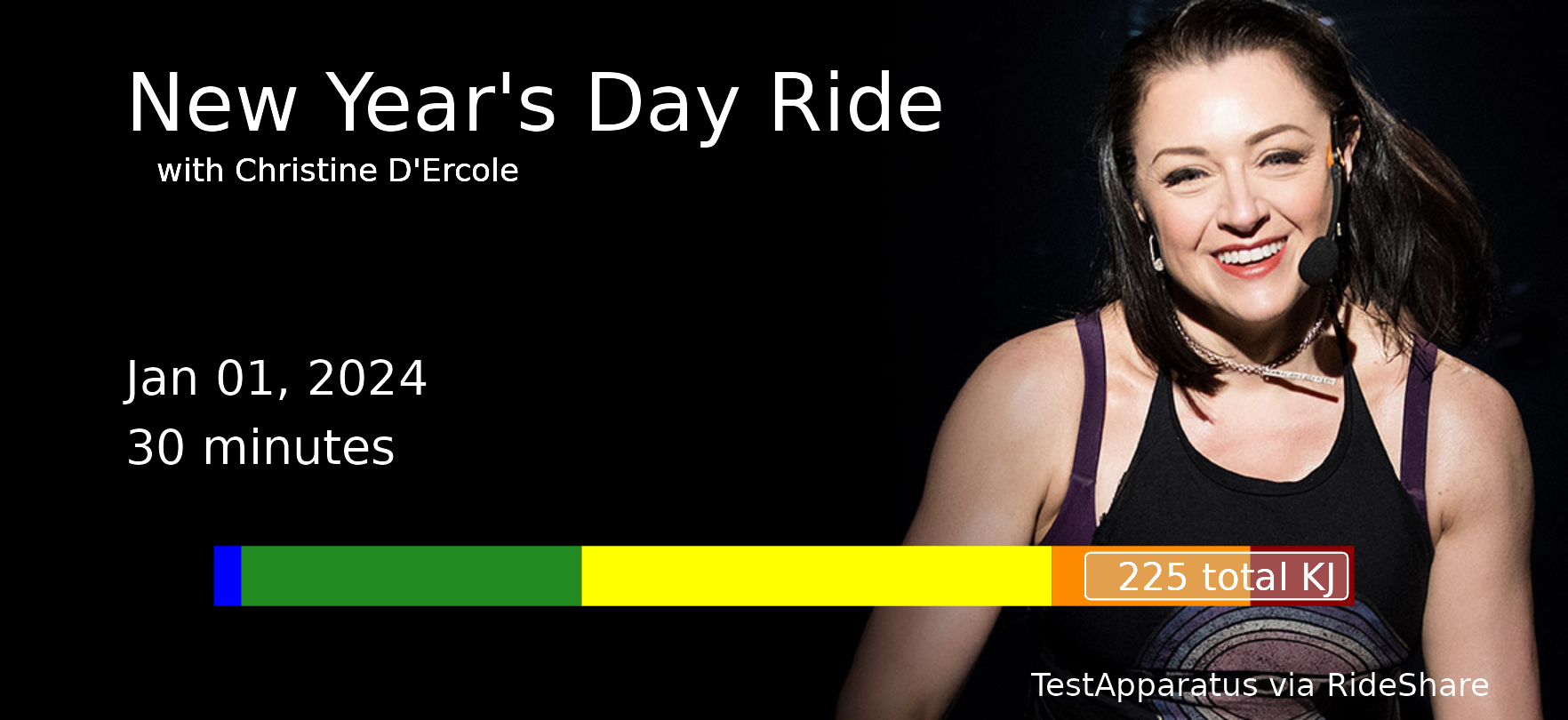

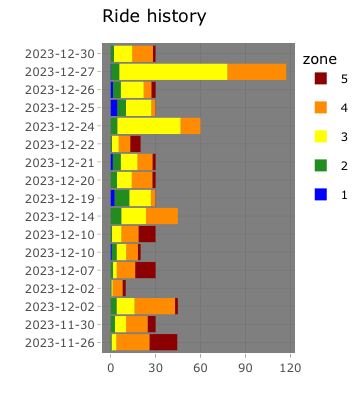

I did this two-hour power zone ride the other day – whoooo! – and now all the other rides in my recent history plot look like lil tiny guys.

My personal OneDrive account is connected to my Xbox, and for years and years the only thing in it was game clips and screenshots. So once in a while I get an “on this date last year” sort of email from Microsoft, and it’s always full of nothing but Destiny 2 clips, mostly PVP and mostly Iron Banner. (Some of them are bangers! Every once in a while I’m good at this game.)

In other blog news, I’ve swapped in webmention support for links from Mastodon, at least partially. Reposts aren’t quite displayed as I’d like, but likes and replies should be working. I had to remember/rediscover a couple of bits of blog plumbing, which makes me sort of want to revise my whole setup here. BUT. I don’t think I’ll go quite that far.

I really like all the stuff on Robb Knight’s website, and The Web is Fantastic is a super example of why. In this slow span of days before New Year’s I love having a list of inspiring Things To Do On the Web.